Edge-Based, Machine-Learning-Enabled Materials Exploration Pipeline

New Argonne machine learning models will open the possibility for real-time experiment feedback critical to understanding materials.

March 10, 2023 | Argonne National Lab - Photon Sciences

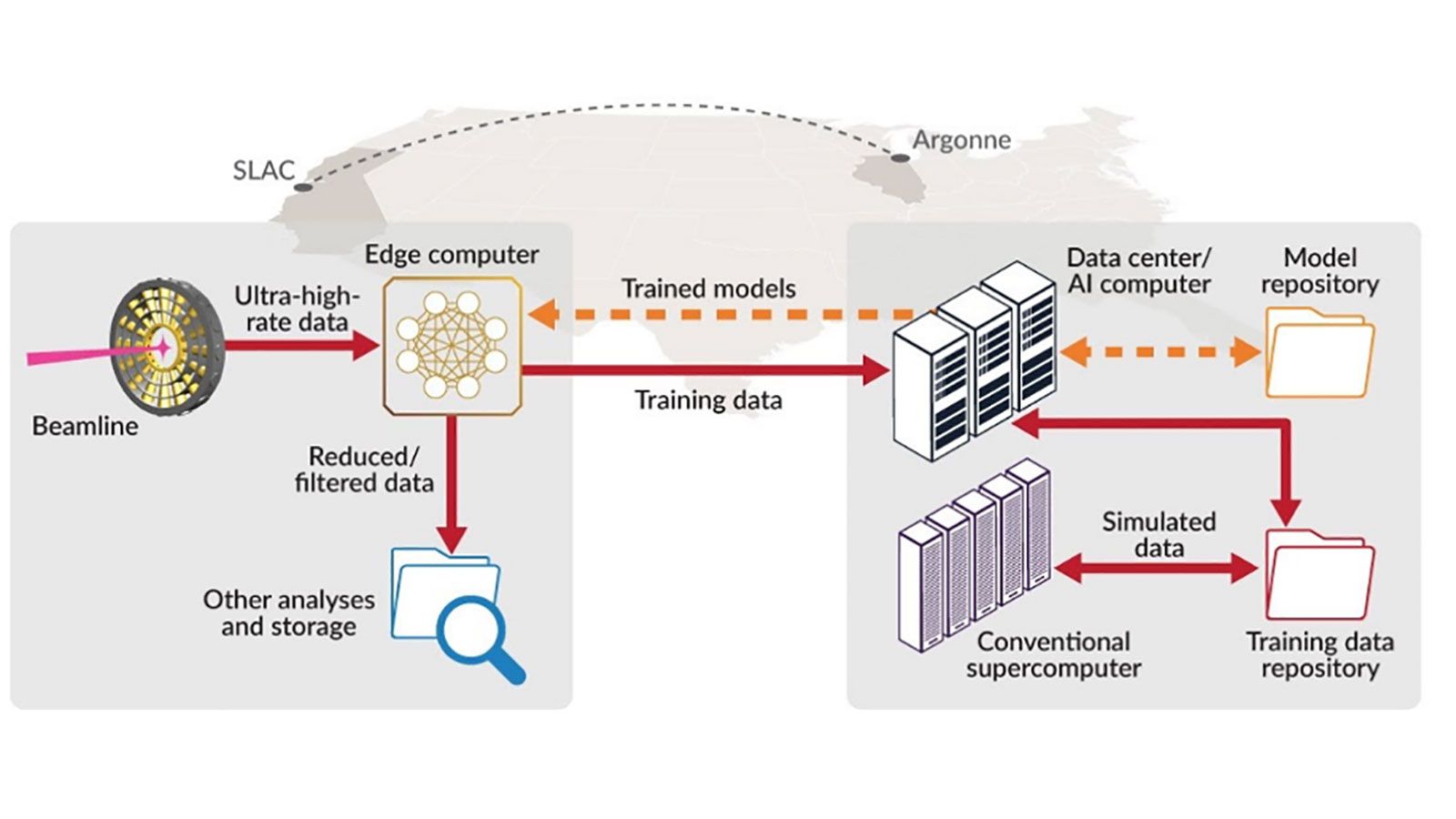

Researchers from Argonne and SLAC have demonstrated the ability to use beamline data from live experiments to train machine learning (ML) models on remote supercomputers and deploy the results on edge devices at beamlines, enabling near-real-time data processing.

This workflow demonstrates the feasibility of using powerful, yet remote, data center systems to enable rapid processing of beamline data. The process works by (re)training deep neural networks in near real time and deploying the (re)trained models for execution on beamline edge devices, giving experimenters near-instantaneous feedback.

- Diffraction data generated by instruments at the Advanced Photon Source (APS) and the Linac Coherent Light Source (LCLS) are streamed over high-bandwidth networks to the Argonne Leadership Computing Facility (ALCF).

- The Cerebras and SambaNova computing systems at the ALCF rapidly (re)train deep neural network models, which are (re)deployed on edge devices at the APS and LCLS.

- Using this approach, ML models for Bragg peak analysis in High-Energy Diffraction Microscopy experiments are trained in less than 3 minutes, as opposed to tens of minutes on a local system.

- The Globus Flow service manages the workflow, the Globus Transfer service moves trained models, and funcX abstracts the various computing resources (e.g., supercomputer, SambaNova, and Cerebras).
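
The loop described in the list above can be sketched in a few lines of Python. This is only an illustrative toy under stated assumptions, not the pipeline's actual code: `Endpoint`, `transfer`, `train`, and `retrain_and_deploy` are hypothetical names that stand in for the roles played by remote execution, managed transfer, and workflow orchestration, and the "model" is just the mean of a few numbers rather than a deep neural network.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


# NOTE: every name below is a hypothetical stand-in for illustration.
# None of this is the Globus or funcX API.

@dataclass
class Endpoint:
    """A named location that can hold data and run functions
    (e.g. a beamline edge device or a remote AI system)."""
    name: str
    storage: Dict[str, Any] = field(default_factory=dict)

    def run(self, fn: Callable[..., Any], *args: Any) -> Any:
        # Placeholder for remote execution: here the call simply runs locally.
        return fn(*args)


def transfer(src: Endpoint, dst: Endpoint, key: str) -> None:
    """Copy one named item between endpoints (placeholder for a managed transfer)."""
    dst.storage[key] = src.storage[key]


def train(frames: List[float]) -> Dict[str, float]:
    """Toy 'training': the model is only the mean of the frames it has seen."""
    return {"mean": sum(frames) / len(frames), "n_frames": float(len(frames))}


def retrain_and_deploy(edge: Endpoint, remote: Endpoint) -> List[str]:
    """One pass of the loop: send data out, retrain remotely, bring the model back."""
    log: List[str] = []

    transfer(edge, remote, "frames")          # beamline data -> remote system
    log.append("transfer_data")

    remote.storage["model"] = remote.run(train, remote.storage["frames"])
    log.append("train")

    transfer(remote, edge, "model")           # trained model -> edge device
    log.append("deploy_model")
    return log


# Usage: the edge device holds fresh frames and ends up with a retrained model.
edge = Endpoint("beamline-edge", storage={"frames": [1.0, 2.0, 3.0]})
remote = Endpoint("remote-ai-system")
steps = retrain_and_deploy(edge, remote)
print(steps)
print(edge.storage["model"])
```

Keeping transfer, execution, and orchestration as separate pieces mirrors the division of labor in the list above: each part could be swapped for a real service without changing the overall shape of the loop.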