Scientists at Argonne Leverage Globus in "Sim-City" Modelling to Battle COVID-19

October 19, 2020 | Susan Tussy

Image: Courtesy of Argonne National Laboratory

Scientists at Argonne National Laboratory have deployed some of the nation’s most powerful computing resources and developed large-scale epidemiological models to give policy makers forecasts and scenario analyses of how various mitigation measures could help contain COVID-19.

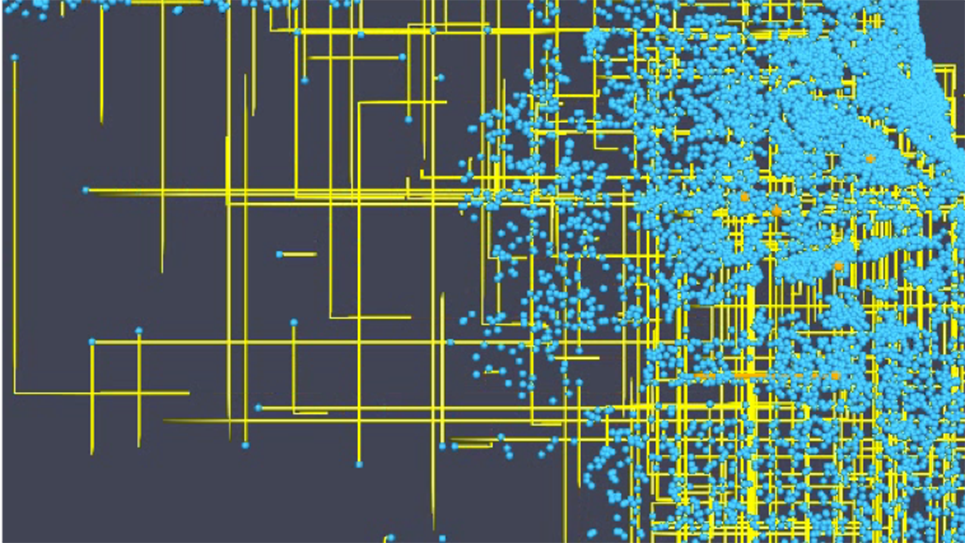

The group at Argonne has developed CityCOVID, a detailed agent-based model that represents the 2.7 million residents of Chicago in terms of people (behaviors and social interactions), places (including 1.2 million unique geo-locations such as households, schools, workplaces, and hospitals), and hourly activity schedules. These agents are not real individuals. Rather, they are synthetic representations of individuals, built from census data, that reflect Chicago’s socio-economic makeup (age, home/school/work location, etc.). This detailed approach is more computationally demanding than other methods being applied to COVID-19, such as statistical and compartmental models. However, it allows the researchers to model specific, realistic non-pharmaceutical interventions (such as restrictions on particular types of businesses, or detailed school attendance models) that closely resemble those being considered by public health officials. Initially, the questions put to the researchers centered on the impact of shutting down areas to slow the spread. Questions about re-opening followed, and policy makers are now shifting their attention to vaccines and their strategic, efficient, and equitable distribution.
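To make the people, places, and hourly schedules structure described above more concrete, here is a deliberately tiny sketch of an agent-based model in Python. It is not CityCOVID and is not based on its code: every name (`Agent`, `make_schedule`, `step_hour`, `simulate`), the fixed 8:00 to 17:00 workday, the per-contact transmission probability, and the rule that a closed place sends agents home are illustrative assumptions.

```python
import random
from dataclasses import dataclass


@dataclass
class Agent:
    agent_id: int
    schedule: list  # schedule[hour] -> place name, one entry per hour of the day
    infected: bool = False


def make_schedule(home, daytime_place):
    # Assumed toy schedule: 08:00-16:59 at a workplace, otherwise at home.
    return [daytime_place if 8 <= hour < 17 else home for hour in range(24)]


def step_hour(agents, hour, transmission_prob, rng, closed_places=frozenset()):
    """Advance one hour: group agents by place, then expose co-located agents."""
    occupants = {}
    for agent in agents:
        place = agent.schedule[hour]
        if place in closed_places:
            # Hypothetical intervention: a closed place sends its agents home.
            place = agent.schedule[0]
        occupants.setdefault(place, []).append(agent)

    newly_infected = []
    for group in occupants.values():
        n_infected = sum(a.infected for a in group)
        if n_infected == 0:
            continue
        # Chance of catching the infection from at least one infected occupant.
        p_infection = 1 - (1 - transmission_prob) ** n_infected
        for a in group:
            if not a.infected and rng.random() < p_infection:
                newly_infected.append(a)

    # Apply infections after the hour so they do not spread within the same step.
    for a in newly_infected:
        a.infected = True
    return len(newly_infected)


def simulate(n_agents=200, n_days=5, transmission_prob=0.01,
             closed_places=frozenset(), seed=0):
    """Run the toy model and return the number of infected agents at the end."""
    rng = random.Random(seed)
    agents = []
    for i in range(n_agents):
        home = f"household_{i // 4}"               # four agents per household
        daytime = f"workplace_{rng.randrange(10)}"  # ten shared workplaces
        agents.append(Agent(i, make_schedule(home, daytime)))
    agents[0].infected = True  # single seed case
    for _day in range(n_days):
        for hour in range(24):
            step_hour(agents, hour, transmission_prob, rng, closed_places)
    return sum(a.infected for a in agents)
```

Because each agent carries its own schedule, an intervention can be expressed as a change to specific places rather than as a change to a population-wide rate. For example, `simulate(closed_places=frozenset(f"workplace_{k}" for k in range(10)))` confines spread to households in this toy setup.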

To perform this kind of granular analysis, the researchers use Theta, Argonne’s supercomputer. The group employs machine learning methods to guide large numbers of CityCOVID runs, transforming Theta into an in silico laboratory in which each experiment produces 500 GB to 1 TB of simulation output. Model outputs are archived to Petrel, a storage and data service run by Argonne and accessible via Globus, and then transferred to the Argonne LCRC cluster for post-processing. Once the results have been distilled, some of the data is shared with collaborators through one of the Globus storage connectors. The raw outputs are also made available through curated access to Petrel, using the fine-grained access control mechanisms in Globus. While many of these steps require human intervention, the goal is to eventually automate much of the workflow with the platform services offered by Globus.

“What we know about COVID-19 and the SARS-CoV-2 virus has evolved over the course of the pandemic. Globus technologies have enabled the rapid simulation, analysis, and data distribution cycle that is needed to support decision making during an ongoing public health crisis.”

– Jonathan Ozik, Computational Scientist, Argonne National Laboratory

Previous stories on Argonne researchers’ COVID-19 modeling efforts can be found here:

Researchers use ALCF resources to model the spread of COVID-19

Argonne Uses Supercomputer to Model Coronavirus Spread in Chicago